- Home

- Services

Strategy

Get a comprehensive community strategy

Technology

Have a best-in-class community experience

Intelligence

Measure and prove your value

Training

Develop a world-class community team

Subscribe by email

Get regular insights by email

- Resources

Blog

Browse our latest insights

Webinars

Access free lessons

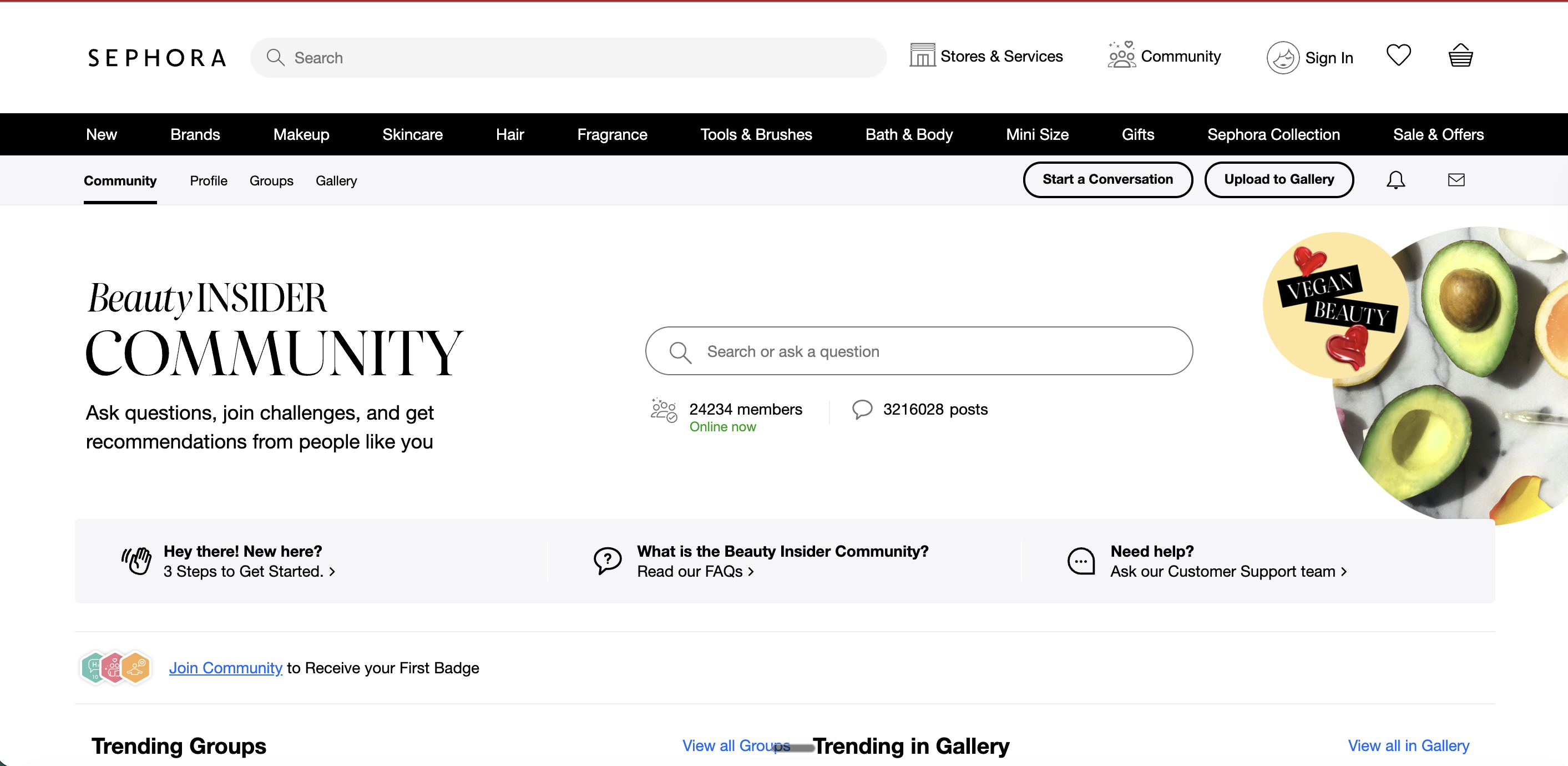

Browse Great Examples

Get inspiration from top communities

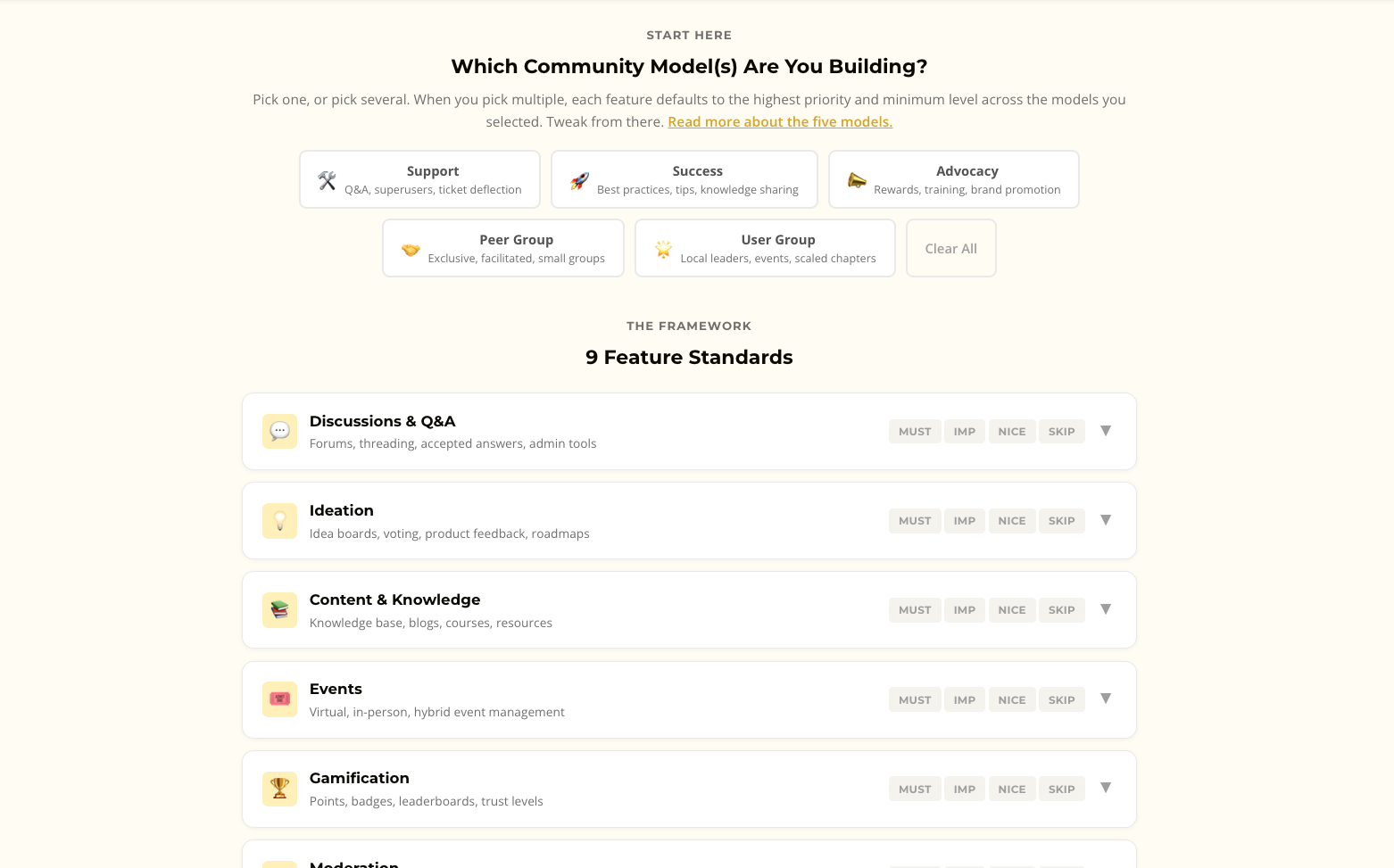

Buzz Report - Evaluation Of The Top 6 Platforms

Discover the pros and cons of the top six community platforms

Books

Published by us and recommendations

Best Practices

Creating Successful Community Strategies

Learn how to create a successful community strategy from scratch

Calculate ROI

Discover how to prove ROI

Superuser Programs

Nurture top members

Beginners' Guide To Community Management

Get quickly up to speed on the basics.

Strategic Community Management

Learn how to approach your community strategically.

- Case Studies

- Insights
